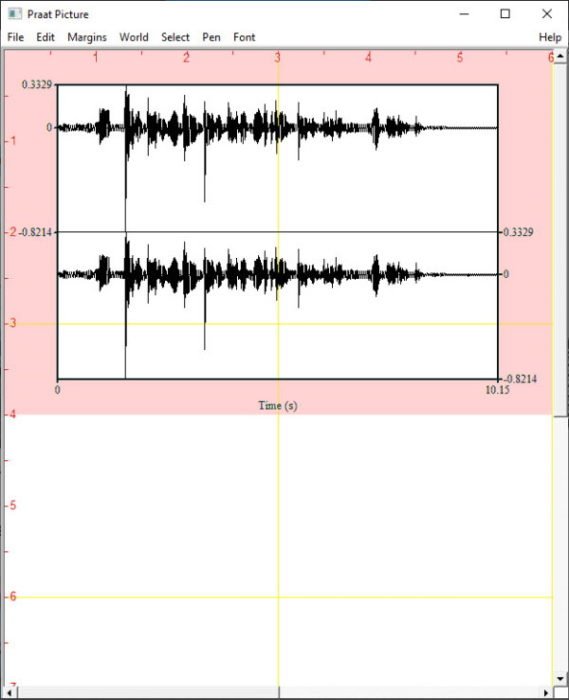

Based on acoustic measures (TF32 software 14) of the original and. We ran this as a group tutorial on a couple of occasions. Using the PRAAT acoustic analysis software 13, F0 and the first (F1) and second. If you’re already using FP16 or BF16 mixed precision it. According to NVIDIA research, the majority of machine learning training workloads show the same perplexity and convergence with TF32 training as with FP32.

Again, designed for researchers at McGill. CUDA will automatically switch to using TF32 instead of FP32 where possible, assuming that the GPU in use is from the Ampere series. Self-directed Praat scripting tutorial: A set of scripts and a text file designed to help keen novice Praat scripters learn the ins and outs of getting Praat to do things for you. software program titled Time-frequency analysis for 32-bit Windows (TF32: Lab. It has various "levels," the more advanced of which are not completed yet. PRAAT software generated spectrograms and pitch contour to aid acoustic. Interactive Praat scripting tutorial: Designed to be game-like in nature for research assistants I worked with and trained at McGill. Visual analog scale praat: script to administer perceptual experiments that require listeners to rate audio clips using a visual analog scale. Descriptions of a selection of resources in this repository. The advantage of Praat is that the scripts allow researchers to. If you see something that looks potentially useful but not in its current form, feel free to reach out and I'll see if I have something more general, or can point you in the direction of how to clean it up in a way that's more useful for you. Praat is a representative tool for speech evaluation. I also have a private collection of scripts that are just not really polished enough to put openly online. At some point I'll make the organization of this more user friendly but today is not that day. TF32 exists as something that can be quickly plugged in to exploit Tensor Core speed without much work. At the moment the organization of these scripts is project-specific, though many of the scripts themselves are easily adaptable. This finding is consistent with our previous finding on healthy speakers, 11 but also corroborates recent data from another research team.
We still encourage programmers to put effort into using those formats, since they reduce memory bandwidth and consequently permit even faster execution. Based on the report, it should be: FP32: 0.14s. As far as I know, when I activate TF32 mode on A100 I should get performance. I do a matmul on two 10240×10240 matrices. I don't really buy it; on TV Tropes, there is a list with a ton of Critical Research Failures, and this is not only about TF2 and Overwatch. Accelerated Computing CUDA CUDA Programming and Performance. E.g., perhaps use it for the initial iterations of a linear solver, and then use slower FP32 to polish the results. Formats such as FP16 and BFloat16 are more work, since they involve different bit layouts. So, last time I checked the Game Theory YouTube channel, I found this video. Basically, he is just trying to say that it's just a persona, A GAME PERSONA that is the know-it-all and arrogant jackass. So exploiting TF32 will largely be a matter of tweaking callers of these libraries to indicate whether TF32 is okay. Big linear operations are usually done via libraries anyway, e.g. On very large networks the need for mixed precision is even more evident.
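The matmul comparison mentioned above can be sketched roughly as follows. This is a minimal, unofficial timing sketch and not the benchmark behind the quoted 0.14s figure: it assumes PyTorch is installed with a CUDA build and an Ampere-or-newer GPU is present. The helper name `time_matmul` and the `repeats` parameter are made up for illustration; `torch.backends.cuda.matmul.allow_tf32` is the PyTorch switch that tells the matmul library whether TF32 is acceptable.

```python
import time

try:
    import torch  # optional dependency; the timing only runs when it is installed
except ImportError:
    torch = None


def time_matmul(n: int, allow_tf32: bool, repeats: int = 10) -> float:
    """Return mean seconds per n x n FP32 matmul on the current CUDA device.

    Hypothetical helper for illustration; requires PyTorch with CUDA.
    """
    # Tell the matmul backend whether it may use TF32 Tensor Core math.
    torch.backends.cuda.matmul.allow_tf32 = allow_tf32
    a = torch.randn(n, n, device="cuda", dtype=torch.float32)
    b = torch.randn(n, n, device="cuda", dtype=torch.float32)
    torch.matmul(a, b)          # warm-up so one-time setup cost is not timed
    torch.cuda.synchronize()
    start = time.perf_counter()
    for _ in range(repeats):
        torch.matmul(a, b)
    torch.cuda.synchronize()    # wait for queued kernels before stopping the clock
    return (time.perf_counter() - start) / repeats


if __name__ == "__main__":
    if torch is not None and torch.cuda.is_available():
        for flag in (False, True):
            print(f"allow_tf32={flag}: {time_matmul(10240, flag):.4f} s per matmul")
    else:
        print("PyTorch with CUDA not available; skipping the timing run.")
```

The explicit `torch.cuda.synchronize()` calls matter because CUDA kernels launch asynchronously; without them the loop would mostly measure launch overhead. On GPUs without TF32 support the flag has no effect, so both timings should come out about the same.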

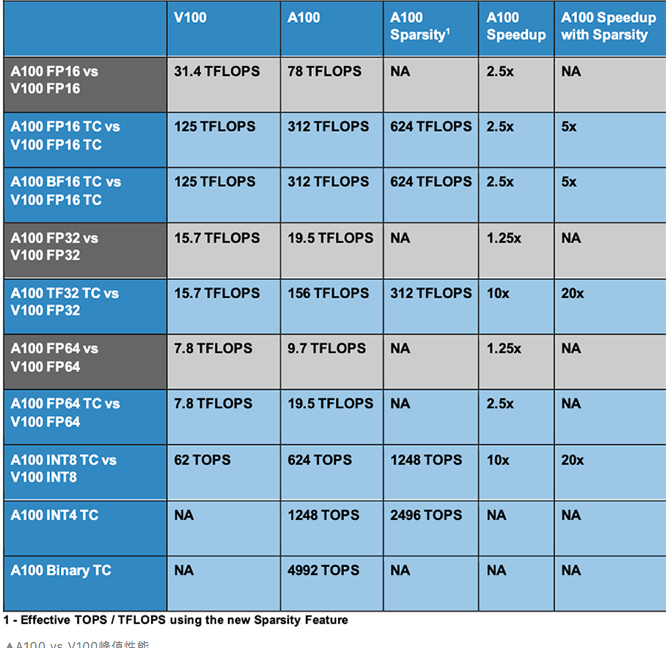

When you compare it to the FP32 performance on the Titan RTX you get speedups of 91-98%. showed that mixed precision training is 1.5x to 5.5x faster over float32 on V100 GPUs, and an additional 1.3x to 2.5x faster on A100 GPUs on a variety of networks. TF32 on the 3090 (which is the default for PyTorch) is very impressive. The rest of the code just sees FP32 with less precision, but the same dynamic range. Purpose: This study examines accuracy and comparability of 4 trademarked acoustic analysis software packages (AASPs): Praat, WaveSurfer, TF32, and CSL by using synthesized and natural vowels. With FP32 tasks, the RTX 3090 is much faster than the Titan RTX (21-26% depending on the Titan RTX power limit). The big advantage of TF32 is that compiler support is required only at the deepest levels, i.e. It can significantly speed up computations with typically negligible loss of numerical accuracy. The rounded operands are multiplied exactly, and accumulated in normal FP32. By default PyTorch enables TF32 mode for convolutions but not matrix multiplications, and unless a network requires full float32 precision we recommend enabling this setting for matrix multiplications, too. When computing inner products with TF32, the input operands have their mantissas rounded from 23 bits to 10 bits. The advantage of TF32 is that the format is the same as FP32. Disclosure - I work for Nvidia (specifically TensorRT) and have written unit tests that can distinguish whether TF32 kicked in or not.
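The rounding described above (mantissas cut from 23 bits to 10, exact products, FP32 accumulation) can be emulated on the CPU to get a feel for the precision loss. This is a rough sketch in plain Python, not a model of the actual Tensor Core hardware. The function names are invented for illustration, round-to-nearest-even is an assumption (the text only says "rounded"), and the accumulation step only approximates FP32 addition.

```python
import struct


def f32_bits(x: float) -> int:
    """Bit pattern of x after conversion to IEEE 754 single precision."""
    return struct.unpack("<I", struct.pack("<f", x))[0]


def bits_f32(bits: int) -> float:
    """Inverse of f32_bits: interpret a 32-bit pattern as an FP32 value."""
    return struct.unpack("<f", struct.pack("<I", bits))[0]


def round_to_tf32(x: float) -> float:
    """Keep the sign and 8-bit exponent, round the mantissa to 10 bits.

    Assumption: round-to-nearest-even on the 13 discarded mantissa bits.
    """
    bits = f32_bits(x)
    if (bits & 0x7F800000) == 0x7F800000:
        return x  # leave infinities and NaNs untouched
    lsb = (bits >> 13) & 1                      # lowest mantissa bit that is kept
    bits = (bits + 0x0FFF + lsb) & 0xFFFFE000   # round, then clear the low 13 bits
    return bits_f32(bits)


def to_f32(x: float) -> float:
    """Round a Python float (double) to the nearest FP32 value."""
    return bits_f32(f32_bits(x))


def tf32_dot(xs, ys) -> float:
    """Inner product with TF32-rounded inputs and an FP32 running sum."""
    acc = 0.0
    for x, y in zip(xs, ys):
        # Two 11-bit significands multiply exactly in double precision.
        prod = round_to_tf32(x) * round_to_tf32(y)
        # Round the running sum back to FP32 after every step
        # (an approximation of FP32 accumulation).
        acc = to_f32(acc + prod)
    return acc


if __name__ == "__main__":
    print(round_to_tf32(0.1), tf32_dot([0.1, 0.2, 0.3], [0.4, 0.5, 0.6]))
```

Because only the mantissa is shortened, values keep the FP32 exponent range; the relative error of a rounded input is at most 2^-11, which is why the rest of the code just sees FP32 with less precision.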
